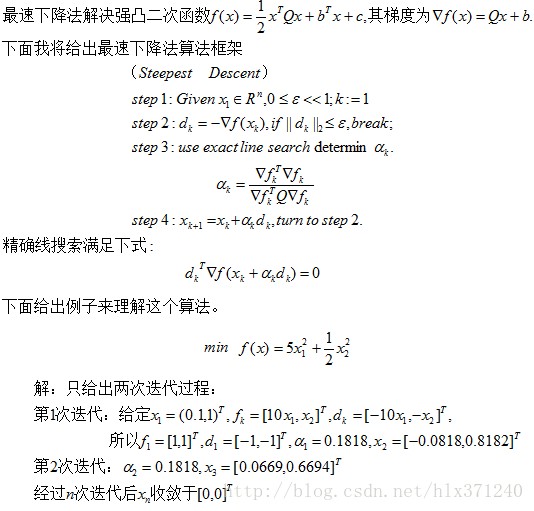

上两节讲了信赖域法+狗腿法,其中第二节中的(“强凹凸二次函数”改为“强凸二次函数”),这一节将会讲最速下降法SD,最速下降法在模式识别和机器学习中运用最为广泛,在Deep Learning中也应用了最速下降法,尤其在在卷积神经网络中,熟悉CNNs(convolutional neural networks )的人知道卷积神经网络大量用于图像识别与跟踪。利用最速下降法在反馈调节中调参,在CUDA-Convnet的卷积神经网络的程序中,我们需要手动调节训练的学习率,而最速下降法分别介绍了两种动态确定学习率的方法,第一种是inexact line search(非精确线搜索)。第二种是exact line search(精确线搜索)。这里我们主要利用exact line search确定下降的步长,也就是机器学习里所指的学习率。下面我将讲最速下降法。

mian.m

x=[0.1,1]'; Q=[10 0;0 1]; itera=10000; for i=1:itera d=-g1(x); a=(g1(x)'*g1(x))/(g1(x)'*Q*g1(x)); x=x+a*d; end

<span style="font-family: 'Times New Roman';font-size:18px;">g1.m </span>

function y = g1(x) y = [10*x(1), x(2)]'; end

下面介绍最速下降法在回归分析的运用。依旧用第二节给出的问题,给出了47个训练样本,训练样本的y值为房子的价格,x属性有2个,一个是房子的大小,另一个是房子卧室的个数。需要通过这些训练数据来学习系统的函数。

得到的结果如下图所示:

SD.m

x = load('ex3x.dat'); y = load('ex3y.dat'); trustRegionBound = 1000; x = [ones(size(x,1),1) x]; meanx = mean(x);%求均值 sigmax = std(x);%求标准偏差 x(:,2) = (x(:,2)-meanx(2))./sigmax(2); x(:,3) = (x(:,3)-meanx(3))./sigmax(3); itera_num = 1000; %尝试的迭代次数 sample_num = size(x,1); %训练样本的次数 figure alpha = [0.01, 0.03, 0.1, 0.3, 1, 1.3];%因为差不多是选取每个3倍的学习率来测试,所以直接枚举出来 plotstyle = {'b', 'r', 'g', 'k', 'b--', 'r--'}; theta_grad_descent = zeros(size(x(1,:))); %% 信赖域+狗腿法 theta = zeros(size(x,2),1); %theta的初始值赋值为0 Jtheta = zeros(itera_num, 1); for i = 1:itera_num %计算出某个学习速率alpha下迭代itera_num次数后的参数 Jtheta(i) = (1/(2*sample_num)).*(x*theta-y)'*(x*theta-y);%Jtheta是个行向量 grad = (1/sample_num).*x'*(x*theta-y); B=x'*x; du = -grad' * grad * grad / (grad' * B * grad); dB = -B^-1 * grad; a = 2; if du'*du > trustRegionBound*trustRegionBound; a = trustRegionBound / sqrt((du'*du)); else if dB'*dB > trustRegionBound*trustRegionBound a = sqrt((trustRegionBound*trustRegionBound - du'*du) / ((dB-du)'*(dB-du))) + 1; end end if a < 1 d = a * du; else d = du + (a - 1) * (dB - du); end Jtheta1(i)=(1/(2*sample_num)).*(x*(theta+d)-y)'*(x*(theta+d)-y); p = (Jtheta(i)-Jtheta1(i))/(-grad'*d-1/2*d'*B*d); if p > 0.75 && sqrt(abs(d'*d) - trustRegionBound) < 0.001 trustRegionBound = min(2 * trustRegionBound, 10000); else if p < 0.25 trustRegionBound = sqrt(abs(d'*d)) * 0.25; end end if p > 0%q(zeros(2,1),x) > q(d, x) theta = theta + d; end end K(1)=Jtheta(1000) plot(0:350, Jtheta(1:351),'k--','LineWidth', 2)%此处一定要通过char函数来转换 hold on %% 固定学习率法 theta_grad_descent = zeros(size(x(1,:))); for alpha_i = 1:length(alpha) %尝试看哪个学习速率最好 theta = zeros(size(x,2),1); %theta的初始值赋值为0 Jtheta = zeros(itera_num, 1); for i = 1:itera_num %计算出某个学习速率alpha下迭代itera_num次数后的参数 Jtheta(i) = (1/(2*sample_num)).*(x*theta-y)'*(x*theta-y);%Jtheta是个行向量 grad = (1/sample_num).*x'*(x*theta-y); theta = theta - alpha(alpha_i).*grad; end K(alpha_i+1)=Jtheta(1000); plot(0:350, Jtheta(1:351),char(plotstyle(alpha_i)),'LineWidth', 2)%此处一定要通过char函数来转换 hold on end %% %% SD算法 theta = zeros(size(x,2),1); %theta的初始值赋值为0 Jtheta = zeros(itera_num, 1); for i = 1:itera_num %计算出某个学习速率alpha下迭代itera_num次数后的参数 Jtheta(i) = (1/(2*sample_num)).*(x*theta-y)'*(x*theta-y);%Jtheta是个行向量 grad = (1/sample_num).*x'*(x*theta-y); Q=x'*x; d=-grad; a=(grad'*grad)/(grad'*Q*grad); theta = theta + a*d; end K(1)=Jtheta(1000) plot(0:350, Jtheta(1:351),'b--','LineWidth', 2); hold on %% legend('Trust Region with DogLeg','0.01','0.03','0.1','0.3','1','1.3','Steepest Descent'); xlabel('Number of iterations') ylabel('Cost function') figure plot(1:7,K,'b-','LineWidth', 2);